- #INSTALL APACHE SPARK WINDOWS HOW TO#

- #INSTALL APACHE SPARK WINDOWS INSTALL#

- #INSTALL APACHE SPARK WINDOWS SOFTWARE#

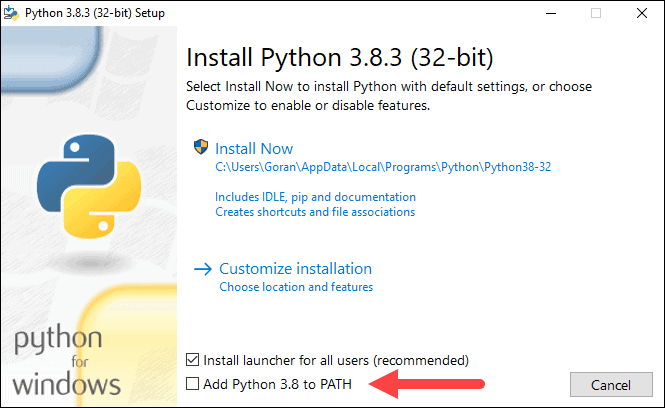

I was able to come up with the following brief steps, which led me to a working installation of Apache Spark. To install Spark in a Windows-based environment, the following prerequisites should be fulfilled first.

- If you are a Python user, install Python 2.6 or above; otherwise this step is not required. If you are not a Python user, you also do not need to set up the Python path as an environment variable.
- Download a pre-built Spark binary for Hadoop. I chose Spark release 1.2.1, package type "Pre-built for Hadoop 2.3 or later", from here. Once it was downloaded, I unzipped the *.tar file to the D drive using WinRAR (you can unzip it to any drive on your computer). The benefit of using a pre-built binary is that you do not have to go through the trouble of building the Spark binaries from scratch.
- Download and install Scala version 2.10.4 from here only if you are a Scala user; otherwise this step is not required.
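
If you would rather unpack the download with a script than with WinRAR, the extraction step can be sketched in Python using the standard `tarfile` module. This is a minimal sketch, not the method used in this post: the function name `extract_spark` is made up for illustration, and the archive name and target drive in the usage note are assumptions that you should adjust to match your own download.

```python
import tarfile
from pathlib import Path


def extract_spark(archive_path, dest_dir):
    """Unpack a Spark .tar/.tgz archive into dest_dir.

    Returns the sorted top-level names found in the archive, which is
    normally a single folder holding the Spark distribution.
    """
    dest = Path(dest_dir)
    dest.mkdir(parents=True, exist_ok=True)
    with tarfile.open(archive_path) as archive:
        # Newer Python versions offer extraction filters that reject
        # unsafe members; use the "data" filter when it is available.
        kwargs = {"filter": "data"} if hasattr(tarfile, "data_filter") else {}
        archive.extractall(dest, **kwargs)
        top_level = sorted(
            {member.name.split("/")[0] for member in archive.getmembers()}
        )
    return top_level
```

For example, `extract_spark("spark-1.2.1-bin-hadoop2.3.tgz", "D:/")` would unpack the archive onto the D drive, assuming the downloaded file has that name and sits in the current folder.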

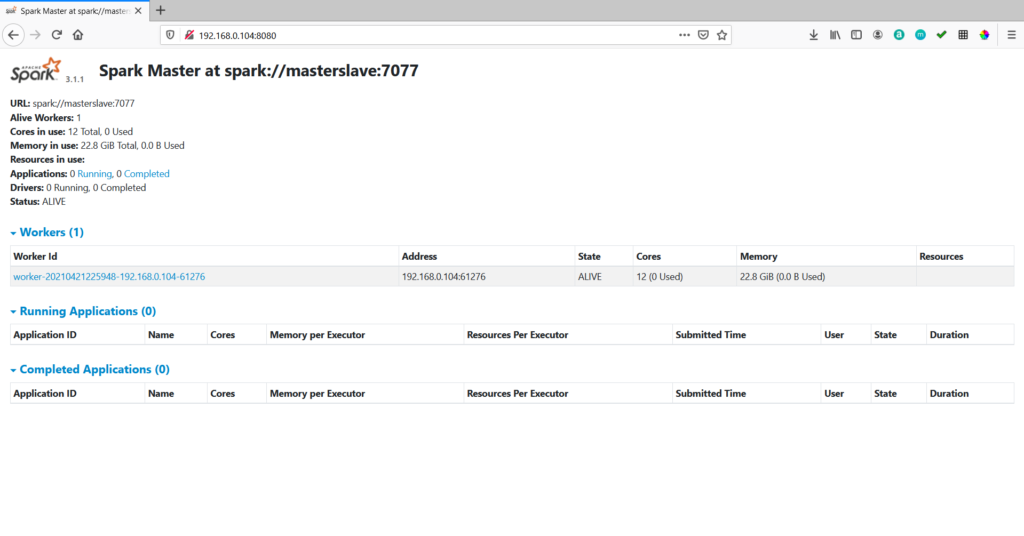

Apache Spark is a lightning-fast cluster computing engine well suited to big data processing. To learn how to work with it, there is currently a MOOC conducted by UC Berkeley here. However, the course uses a pre-configured VM setup specific to the MOOC and its lab exercises, and I wanted to get a taste of this technology on my personal computer. I spent two days searching the internet to find out how to install and configure it in a Windows-based environment.

Now, edit the Path variable to add the %SPARK_HOME%\bin path. To run Spark, open a Command Prompt (CMD) and type spark-shell. If everything is correct, you will get a screen like this without any errors. To create a Spark project using SBT to work with Eclipse, check this link. If you get any errors while installing Spark, please post the problem in the comments and I will try to solve it.
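
If spark-shell is not recognized as a command, the usual cause is a missing Path entry. The following Python sketch checks whether the `bin` folders for SPARK_HOME and HADOOP_HOME appear in the Path variable. The helper name `missing_path_entries` is hypothetical, and the sketch assumes the Path it receives has already had its %VARIABLE% references expanded, as is normally the case when a process reads its environment on Windows.

```python
import ntpath


def missing_path_entries(env, variables=("SPARK_HOME", "HADOOP_HOME")):
    """Return the expected bin folders that are not listed in the Path variable.

    `env` is a mapping such as os.environ. Windows-style path rules
    (semicolon separators, case-insensitive comparison) are applied
    through the ntpath module, so the check behaves the same on any OS.
    """
    path_value = env.get("Path") or env.get("PATH") or ""
    entries = {
        ntpath.normcase(ntpath.normpath(entry.strip()))
        for entry in path_value.split(";")
        if entry.strip()
    }
    missing = []
    for variable in variables:
        home = env.get(variable)
        if not home:
            missing.append(variable + " is not set")
            continue
        expected = ntpath.join(home, "bin")
        if ntpath.normcase(ntpath.normpath(expected)) not in entries:
            missing.append(expected)
    return missing
```

Calling `missing_path_entries(os.environ)` (after `import os`) from a freshly opened Command Prompt session should return an empty list once both variables and both Path entries are in place. Environment variable changes only reach newly started programs, so reopen the prompt after editing them.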

After extracting the files, create a new folder on the C drive named Spark (or any name you like) and copy the extracted contents into that folder. Now we have to set the SPARK_HOME environment variable. This is done the same way as setting the HADOOP_HOME path.

The Spark download is an archive file, so use software such as 7-Zip to extract it.

If you don't have Java installed on your system, download the appropriate Java version from this link.

Step 2: Downloading Winutils.exe and setting up the Hadoop path

To run Spark on Windows without any complications, we have to download winutils. Now, create a folder on the C drive named winutils. Inside the winutils folder, create another folder named bin and place winutils.exe in it. Now, open the environment variables and click New, and add HADOOP_HOME as shown in the pic. Next, select the Path environment variable, which is already present in the list, and click Edit. In the Path environment variable, click New and add %HADOOP_HOME%\bin, as shown in the picture below.

If you don't have Scala installed on your system, download and install Scala from the official site.
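
The winutils folder layout described above can be verified with a short Python sketch. The function name `check_hadoop_home` is made up for illustration, and the example call assumes that HADOOP_HOME points at the `C:\winutils` folder created earlier, which is an inference from the steps rather than something shown in the original screenshots.

```python
from pathlib import Path


def check_hadoop_home(hadoop_home):
    """Return a list of problems with the winutils layout (empty if none).

    The expected layout is <hadoop_home>/bin/winutils.exe.
    """
    root = Path(hadoop_home)
    problems = []
    if not root.is_dir():
        problems.append(f"{root} does not exist")
    elif not (root / "bin").is_dir():
        problems.append(f"{root / 'bin'} does not exist")
    elif not (root / "bin" / "winutils.exe").is_file():
        problems.append("winutils.exe is missing from the bin folder")
    return problems
```

For example, `check_hadoop_home(r"C:\winutils")` returns an empty list when winutils.exe is in place, and a one-item list naming the first missing piece otherwise.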

Spark is an in-memory cluster computing framework for processing and analyzing large amounts of data. To use it, you must have Java installed on your system.
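
A quick way to confirm the Java prerequisite is to look for the `java` executable on the Path. Here is a minimal Python sketch using the standard `shutil.which` function. The helper name `describe_java` is hypothetical, and the check only tells you whether a `java` command can be found, not which version it is.

```python
import shutil


def describe_java(which=shutil.which):
    """Report whether a java executable can be found on the Path.

    The lookup function is a parameter so the check can be exercised
    without depending on the machine it runs on.
    """
    location = which("java")
    if location is None:
        return "Java was not found on the Path; install it before continuing."
    return "Java found at " + location
```

Running `print(describe_java())` in a new Command Prompt session shows whether the installation is visible to other programs such as spark-shell.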